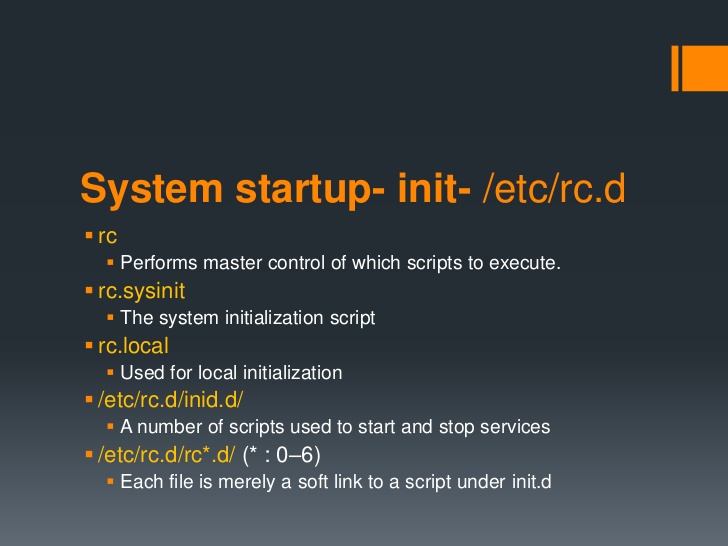

If you have installed a newer version of Debian GNU / Linux such as Debian Jessie or Debian 9 Stretch or Ubuntu 16 Xenial Xerus either on a server or on a personal Desktop laptop and you want tto execute a number of extra commands next to finalization of system boot just like we GNU / Linux users used to do already for the rest 25+ years you will be surprised that /etc/rc.local is no longer available (file is completely missing!!!).

This kind of behaviour (to avoid use of /etc/rc.local and make the file not present by default right after Linux OS install) was evident across many RedHack (Redhat) distributions such as Fedora and CentOS Linux for the last number of releases and the tendency was to also happen in Debian based distros too as it often does, however there was a possibility on this RPM based distros as well as rest of Linux distros to have the /etc/rc.local manually created to work around the missing file.

But NOoooo, the smart new generation GNU / Linux architects with large brains decided to completely wipe out the execution on Linux boot of /etc/rc.local from finalization stage, SMART isn't it??

For instance If you used to eat certain food for the last 25+ years and they suddenly prohibit you to eat it because they say this is not necessery anymore how would you feel?? Crazy isn't it??

Yes I understand the idea to wipe out /etc/rc.local did have a reason as the developers are striving to constanly improve the boot speed process (and the introduction of systemd (system and service manager) in Debian 8 Jessie over the past years did changed significantly on how Linux boots (earlier used SysV boot and LSB – linux standard based init scripts), but come on guys /etc/rc.local

doesn't stone the boot process with minutes, including it will add just 2, 3 seconds extra to boot runtime, so why on earth did you decided to remove it??

What I really loved about Linux through the years was the high level of consistency and inter-operatibility, most things worked just the same way across distributions and there was some logic upgrade, but lately this kind of behaviour is changing so in many of the new things in both GUI and text mode (console) way to interact with a GNU / Linux PC all becomes messy sadly …

So the smart guys who develop Gnu / Linux distros said its time to depreciate /etc/rc.local to prevent the user to be able to execute his set of finalization commands at the end of each booted multiuser runlevel.

The good news is you can bring back (resurrect) /etc/rc.local really easy:

To so, just execute the following either in Physical /dev/tty Console or in Gnome-Terminal (for GNOME users) or for KDE GUI environment users in KDE's terminal emulator konsole:

cat <<EOF >/etc/rc.local

#!/bin/sh -e

#

# rc.local

#

# This script is executed at the end of each multiuser runlevel.

# Make sure that the script will "exit 0" on success or any other

# value on error.

#

# In order to enable or disable this script just change the execution

# bits.

#

# By default this script does nothing.

exit 0

EOF

chmod +x /etc/rc.local

systemctl start rc-local

systemctl status rc-local

I think above is self-explanatory /etc/rc.local file is being created and then to enable it we run systemctl start rc-local and then to check the just run rc-local service status systemctl status

You will get an output similar to below:

root@jericho:/home/hipo# systemctl start rc-local

root@jericho:/home/hipo# systemctl status rc-local

● rc-local.service – /etc/rc.local Compatibility

Loaded: loaded (/lib/systemd/system/rc-local.service; static; vendor preset:

Drop-In: /lib/systemd/system/rc-local.service.d

└─debian.conf

Active: active (exited) since Mon 2017-09-11 13:15:35 EEST; 6s ago

Process: 5008 ExecStart=/etc/rc.local start (code=exited, status=0/SUCCESS)

Tasks: 0 (limit: 4915)

CGroup: /system.slice/rc-local.service

sep 11 13:15:35 jericho systemd[1]: Starting /etc/rc.local Compatibility…

setp 11 13:15:35 jericho systemd[1]: Started /etc/rc.local Compatibility.

To test /etc/rc.local is working as expected you can add to print any string on boot, right before exit 0 command in /etc/rc.local

you can add for example:

echo "YES, /etc/rc.local IS NOW AGAIN WORKING JUST LIKE IN EARLIER LINUX DISTRIBUTIONS!!! HOORAY !!!!";

On CentOS 7 and Fedora 18 codename (Spherical Cow) or other RPM based Linux distro if /etc/rc.local is missing you can follow very similar procedures to have it enabled, make sure

/etc/rc.d/rc.local

is existing

and /etc/rc.local is properly symlined to /etc/rc.d/rc.local

Also don't forget to check whether /etc/rc.d/rc.local is set to be executable file with ls -al /etc/rc.d/rc.local

If it is not executable, make it be by running cmd:

chmod a+x /etc/rc.d/rc.local

If file /etc/rc.d/rc.local happens to be missing just create it with following content:

#!/bin/sh

# Your boot time rc.commands goes somewhere below and above before exit 0

exit 0

That's all folks rc.local not working is solved,

enjoy /etc/rc.local working again 🙂