Thanks God, we have just completed (6 months) Migration few Tomcat and TomEE application servers for PG / PP and Scorpion instances from old environment to a new one for a customer.

Though the separate instances of the old environment are being migrated, the overall design of the Current Mode of Operations (CMO) as they use to call it in corporate World and the Future Mode of Operations (FMO) has differences.

The each of applications on old environment is configured to run in Tomcat failover cluster (2 tomcats on 2 separate machines with unique IP addresses are running) and Apache Reverse Proxy is being used with BalanceMember apache directive in order to drop requests to Tomcat cluster to Tomcat node1 and node2. On the new environment however by design the Tomcat cluster is removed and the application request has to be served by single Tomcat instance.

The migration completed fine and in the beginning in the first day (day 1) and day 2 since the environment went in Production and went through the so-called "GoLive", as called in Corporate World- which is a meathor for launching the application to be used as a production environment for customer, the customer reported TimeOut issues.

Some of the requests according to their report would took up to 4 minutes to serve, after a bit of investigation we found out, that though the environment was moved to one Tomcat the (number) amount of connections to application of end clients did not change, thus the timeouts were caused by default MaxThreads being reached and, we needed to to obviously raise that number. Here is the old Apache RP config where we had the 2 Tomcats between which the RP was load balancing:

BalancerMember ajp://10.10.10.5:11010 route=node1 connectiontimeout=10 ttl=60 retry=60

BalancerMember ajp://10.10.10.5:11010 route=node2 connectiontimeout=10 ttl=60 retry=60ProxyPass / balancer://pool/ stickysession=JSESSIONID

ProxyPassReverse / balancer://pool/

As we needed a work around, we come to conclusion that we just need to increase Timeout on RP first so on Apache Reverse Proxy we placed following httpd.conf Virtualhost ProxyPass (directive) configs :

ProxyPass / ajp://10.10.10.5:11010/ keepalive=On timeout=30 connectiontimeout=30 retry=20

ProxyPassReverse / ajp://10.10.10.5:11010/ProxyPass / ajp://10.10.10.5:11010/ keepalive=On timeout=30 connectiontimeout=30 retry=20

ProxyPassReverse / ajp://10.10.10.5:11010/

and following Apache Timeout directives options:

Timeout 300

KeepAlive On

MaxKeepAliveRequests 100

KeepAliveTimeout 15

Even though the developer tried to insist that the problem was in Reverse Proxy timeout config, they were wrong as I checked the RP logs and there was no "maximum connections reached" errors..

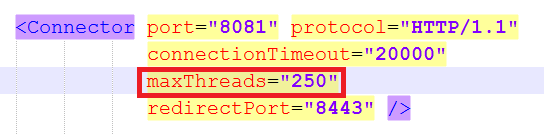

As you could guess what left to check was only Tomcat, after quick evaluation of server.xml, it turned out that the MaxThreads directive on old clustered Tomcats was omitted at all, meaning the default MaxThreads Tomcat value of 200 maximum connections were used, however this was not enough as the client was quering the application with about 350 connections / sec.

The solution was of course to raise the Maxthreads to 400 we were pretty lucky that we already had a good dedicated Linux machine where the application was hosted (16GB Ram, 2 CPUs x 2.67 Ghz), thus raising MaxThreads to 400 was not such a big deal.

Here is the final config we used to fix tomcat timeouts:

<Connector port="11010" address="10.10.10.80" protocol="AJP/1.3" redirectPort="8443" MaxThreads="400" connectionTimeout="300000" keepAliveTimeout="300000" debug="9" />

One note to make here is the debug="9" options to Connector directive was used to increase debug loglevel of Tomcat, and address="" is the local network IP on which Tomcat instance runs. As you see, we choose to use very high connectionTimeouts (because it is crucial, not to cut requests to applications due to timeouts) in case of application slowness.

We also suspected that there are some Oracle (ORA) database queries slowly served on the SQL backend, that might in future cause more app slowness, but this has to be checked seperately further in time as presently we were checking we did not have our Db person present.

More helpful Articles

Tags: apache reverse proxy, Apache Timeout, application, configured, customer, environment, number, RP, slowness, sort