Say you have access to a remote Linux / UNIX / BSD server, i.e. a jump host and you have to remotely access via ssh a bunch of other servers

who have existing IP addresses but the DNS resolver recognized hostnames from /etc/resolv.conf are long and hard to remember by the jump host in /etc/resolv.conf and you do not have a way to include a new alias to /etc/hosts because you don't have superuser admin previleges on the hop station.

To make your life easier you would hence want to add a simplistic host alias to be able to easily do telnet, ssh, curl to some aliased name like s1, s2, s3 … etc.

The question comes then, how can you define the IPs to be resolvable by easily rememberable by using a custom User specific /etc/hosts like definition file?

Expanding /etc/hosts predefined host resolvable records is pretty simple as most as most UNIX / Linux has the HOSTALIASES environment variable

Hostaliases uses the common technique for translating host names into IP addresses using either getaddrinfo(3) or the obsolete gethostbyname(3). As mentioned in hostname(7), you can set the HOSTALIASES environment variable to point to an alias file, and you've got per-user aliases

create ~/.hosts file

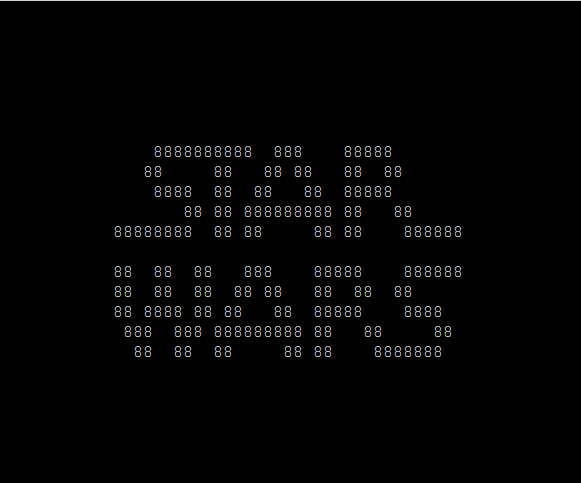

linux:~# vim ~/.hosts

with some content like:

g google.com

localhostg 127.0.0.1

s1 server-with-long-host1.fqdn-whatever.com

s2 server5-with-long-host1.fqdn-whatever.com

s3 server18-with-long-host5.fqdn-whatever.com

…

linux:~# export HOSTALIASES=$PWD/.hosts

The caveat of hostaliases you should know is this will only works for resolvable IP hostnames.

So if you want to be able to access unresolvable hostnames.

You can use a normal alias for the hostname you want in ~/.bashrc with records like:

alias server-hostname="ssh username@10.10.10.18 -v -o stricthostkeychecking=no -o passwordauthentication=yes -o UserKnownHostsFile=/dev/null"

alias server-hostname1="ssh username@10.10.10.19 -v -o stricthostkeychecking=no -o passwordauthentication=yes -o UserKnownHostsFile=/dev/null"

alias server-hostname2="ssh username@10.10.10.20 -v -o stricthostkeychecking=no -o passwordauthentication=yes -o UserKnownHostsFile=/dev/null"

…

then to access server-hostname1 simply type it in terminal.

The more elegant solution is to use a bash script like below:

# include below code to your ~/.bashrc

function resolve {

hostfile=~/.hosts

if [[ -f “$hostfile” ]]; then

for arg in $(seq 1 $#); do

if [[ “${!arg:0:1}” != “-” ]]; then

ip=$(sed -n -e "/^\s*\(\#.*\|\)$/d" -e "/\<${!arg}\>/{s;^\s*\(\S*\)\s*.*$;\1;p;q}" "$hostfile")

if [[ -n “$ip” ]]; then

command "${FUNCNAME[1]}" "${@:1:$(($arg-1))}" "$ip" "${@:$(($arg+1)):$#}"

return

fi

fi

done

fi

command "${FUNCNAME[1]}" "$@"

}function ping {

resolve "$@"

}function traceroute {

resolve "$@"

}function ssh {

resolve "$@"

}function telnet {

resolve "$@"

}function curl {

resolve "$@"

}function wget {

resolve "$@"

}

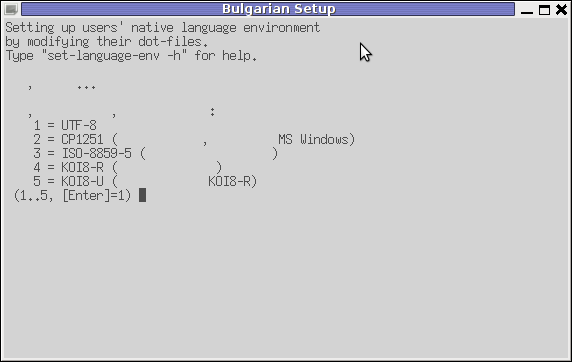

Now after reloading bash login session $HOME/.bashrc with:

linux:~# source ~/.bashrc

ssh / curl / wget / telnet / traceroute and ping will be possible to the defined ~/.hosts IP addresses just like if it have been defined global wide on System in /etc/hosts.

Enjoy