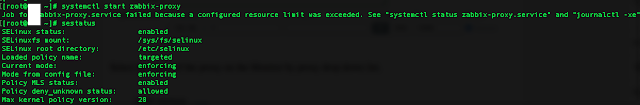

If you have to install a Zabbix client (agent) that communicates with the Zabbix server via a Zabbix proxy, you might be unpleasantly surprised to find that it cannot be started when SELinux is in Enforcing mode.

An error message like the one in the screenshot is displayed when starting the Zabbix proxy / agent with systemctl.

In the Zabbix logs you will see error messages such as:

"cannot set resource limit: [13] Permission denied, CentOS 7"

29085:20160730:062959.263 Starting Zabbix Agent [Test host]. Zabbix 3.0.4 (revision 61185).

29085:20160730:062959.263 **** Enabled features ****

29085:20160730:062959.263 IPv6 support: YES

29085:20160730:062959.263 TLS support: YES

29085:20160730:062959.263 **************************

29085:20160730:062959.263 using configuration file: /etc/zabbix/zabbix_agentd.conf

29085:20160730:062959.263 cannot set resource limit: [13] Permission denied

29085:20160730:062959.263 cannot disable core dump, exiting…
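If you look after several machines, a quick way to tell whether an agent hit this exact failure is to grep its log for the message above. Below is a minimal sketch; `has_limit_error` is only a hypothetical helper name used for illustration, and the default path assumes the usual `/var/log/zabbix/zabbix_agentd.log` location (check the `LogFile` setting in your `zabbix_agentd.conf`).

```shell
# Hypothetical helper: exits 0 if the given Zabbix log (default: the agent
# log path assumed above) contains the SELinux-related startup failure.
has_limit_error() {
    log="${1:-/var/log/zabbix/zabbix_agentd.log}"
    # -q: print nothing, only set the exit status; -F: match the text literally
    grep -qF 'cannot set resource limit: [13] Permission denied' "$log"
}
```

For example: `has_limit_error && echo "SELinux is probably blocking zabbix_agentd"`.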

The next step is to check whether zabbix is listed among SELinux's loaded policy modules. To do so, run:

[root@centos ~ ]# semodule -l

…

…..

vhostmd 1.1.0

virt 1.5.0

vlock 1.2.0

vmtools 1.0.0

vmware 2.7.0

vnstatd 1.1.0

vpn 1.16.0

w3c 1.1.0

watchdog 1.8.0

wdmd 1.1.0

webadm 1.2.0

webalizer 1.13.0

wine 1.11.0

wireshark 2.4.0

xen 1.13.0

xguest 1.2.0

xserver 3.9.4

zabbix 1.6.0

zarafa 1.2.0

zebra 1.13.0

zoneminder 1.0.0

zosremote 1.2.0
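Rather than scrolling through the whole list, you can check for the zabbix module alone. A minimal sketch, with `module_loaded` being a hypothetical helper name; it only compares the first column of each line, which is the module name, so it does not depend on the version column.

```shell
# Hypothetical helper: reads `semodule -l` output on stdin and exits 0 only
# if a module whose name (first column) equals $1 is listed.
module_loaded() {
    awk -v m="$1" '$1 == m { found = 1 } END { exit (found ? 0 : 1) }'
}
```

Usage: `semodule -l | module_loaded zabbix && echo "zabbix module is loaded"`.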

Then confirm that SELinux is enabled and actually running in enforcing mode:

[root@centos ~ ]# sestatus

SELinux status: enabled

SELinuxfs mount: /sys/fs/selinux

SELinux root directory: /etc/selinux

Loaded policy name: targeted

Current mode: enforcing

Mode from config file: enforcing

Policy MLS status: enabled

Policy deny_unknown status: allowed

Max kernel policy version: 28
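If you only care about the enforcement mode, a small filter over the `sestatus` output is enough. This is a sketch under the assumption that the line looks like `Current mode: enforcing` as above; `current_mode` is a hypothetical helper name. The standard `getenforce` command prints the same information as a single word (Enforcing / Permissive / Disabled).

```shell
# Hypothetical helper: prints the value of the "Current mode" line from
# `sestatus` output read on stdin (e.g. "enforcing").
current_mode() {
    # Split each line at the colon plus any following spaces or tabs.
    awk -F':[ \t]*' '$1 == "Current mode" { print $2 }'
}
```

Usage: `sestatus | current_mode`.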

To get the exact SELinux domain (type) names of the Zabbix processes, which are what has to be set to permissive, use ps -eZ like so:

[root@centos ~ ]# ps -eZ |grep -i zabbix

system_u:system_r:zabbix_agent_t:s0 1149 ? 00:00:00 zabbix_agentd

system_u:system_r:zabbix_agent_t:s0 1150 ? 00:04:28 zabbix_agentd

system_u:system_r:zabbix_agent_t:s0 1151 ? 00:00:00 zabbix_agentd

system_u:system_r:zabbix_agent_t:s0 1152 ? 00:00:00 zabbix_agentd

system_u:system_r:zabbix_agent_t:s0 1153 ? 00:00:00 zabbix_agentd

system_u:system_r:zabbix_agent_t:s0 1154 ? 02:21:46 zabbix_agentd
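With many processes running, it helps to reduce the `ps -eZ` output to the distinct SELinux types, since those are the names `semanage permissive` expects. A sketch, assuming the label is the first column in the `user:role:type:level` form shown above; `selinux_types` is a hypothetical helper name.

```shell
# Hypothetical helper: reads `ps -eZ` output on stdin and prints each distinct
# SELinux type (third field of user:role:type:level). Lines whose first
# column has no third field, such as the ps header, are skipped.
selinux_types() {
    awk '{ split($1, ctx, ":"); if (ctx[3] != "") print ctx[3] }' | sort -u
}
```

Usage: `ps -eZ | grep -i zabbix | selinux_types`, which for the output above would print `zabbix_agent_t` once.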

As you can see, the zabbix policy module is loaded and the agent processes run confined in the zabbix_agent_t domain, so SELinux in enforcing mode is preventing the Zabbix agent / proxy from operating and communicating normally. To make it work, we need to switch the Zabbix agent and Zabbix proxy domains to permissive mode.

Setting SELinux to permissive mode for the Zabbix agent and Zabbix proxy

If you don't have them installed yet, you might need the setroubleshoot, setools, setools-console and policycoreutils-python RPM packages (the latter provides semanage). If they are already installed, skip this step.

[root@centos ~ ]# yum install setroubleshoot.x86_64 setools.x86_64 setools-console.x86_64 policycoreutils-python.x86_64

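Then make the Zabbix domains permissive: zabbix_t is the domain the Zabbix server and proxy run in, zabbix_agent_t the one the agent runs in (-a is simply the short form of --add):

[root@centos ~ ]# semanage permissive --add zabbix_t

[root@centos ~ ]# semanage permissive -a zabbix_agent_t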
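If you script this across several hosts, a sketch like the following makes each domain permissive and skips any that `semanage permissive -l` already lists. `make_permissive` and `run` are hypothetical helper names, the assumption that the listing prints one type per line is mine, and setting `DRY_RUN=1` only prints the commands so you can review them first. Real runs need root.

```shell
# Hypothetical helper: with DRY_RUN set, print the command instead of running it.
run() {
    if [ -n "${DRY_RUN:-}" ]; then echo "$*"; else "$@"; fi
}

# Marks each SELinux domain given as an argument permissive (needs root and
# semanage from policycoreutils-python). Outside dry-run mode, domains that
# `semanage permissive -l` already lists are skipped.
make_permissive() {
    for domain in "$@"; do
        if [ -z "${DRY_RUN:-}" ] && semanage permissive -l | grep -qw "$domain"; then
            echo "$domain is already permissive"
            continue
        fi
        run semanage permissive -a "$domain"
    done
}

# Preview:  DRY_RUN=1 make_permissive zabbix_t zabbix_agent_t
# Apply:    make_permissive zabbix_t zabbix_agent_t
# Revert:   semanage permissive -d zabbix_agent_t
```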

In some cases making zabbix_agent_t permissive is not enough on its own; if so, also enable the zabbix_can_network SELinux boolean (-P makes the change persistent across reboots):

[root@centos ~ ]# setsebool -P zabbix_can_network=1
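To confirm the boolean took effect, you can check `getsebool`, whose output has the form `name --> on` or `name --> off`. A minimal sketch with `bool_is_on` as a hypothetical helper name:

```shell
# Hypothetical helper: reads `getsebool` output ("name --> on|off") on stdin
# and exits 0 only if the boolean is reported as on.
bool_is_on() {
    awk '$2 == "-->" { state = $3 } END { exit (state == "on" ? 0 : 1) }'
}
```

Usage: `getsebool zabbix_can_network | bool_is_on && echo "zabbix_can_network is on"`.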

Next, try to start the zabbix-proxy and zabbix-agent systemd services:

[root@centos ~ ]# systemctl start zabbix-proxy.service

…

[root@centos ~ ]# systemctl start zabbix-agent.service

…
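Instead of eyeballing each command's output, a small loop can start both units and then ask `systemctl is-active` whether they are really running. A sketch under the same assumptions as before: `start_and_check` and `run` are hypothetical helper names, and `DRY_RUN=1` only prints the start commands without touching the system.

```shell
# Hypothetical helper: with DRY_RUN set, print the command instead of running it.
run() {
    if [ -n "${DRY_RUN:-}" ]; then echo "$*"; else "$@"; fi
}

# Starts each systemd unit given as an argument and, outside dry-run mode,
# verifies it with `systemctl is-active`. Returns 1 if any unit failed.
start_and_check() {
    rc=0
    for unit in "$@"; do
        run systemctl start "$unit" || rc=1
        if [ -z "${DRY_RUN:-}" ] && ! systemctl is-active --quiet "$unit"; then
            echo "$unit is not active; see: journalctl -u $unit" >&2
            rc=1
        fi
    done
    return "$rc"
}
```

Usage (as root): `start_and_check zabbix-proxy.service zabbix-agent.service`.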

Hopefully both services start without errors; checking the status should then show something like:

[root@centos ~ ]# systemctl status zabbix-agent

● zabbix-agent.service - Zabbix Agent

Loaded: loaded (/usr/lib/systemd/system/zabbix-agent.service; enabled; vendor preset: disabled)

Active: active (running) since Thu 2021-06-24 07:47:42 CEST; 1 weeks 5 days ago

Main PID: 1149 (zabbix_agentd)

CGroup: /system.slice/zabbix-agent.service

├─1149 /usr/sbin/zabbix_agentd -c /etc/zabbix/zabbix_agentd.conf

├─1150 /usr/sbin/zabbix_agentd: collector [idle 1 sec]

├─1151 /usr/sbin/zabbix_agentd: listener #1 [waiting for connection]

├─1152 /usr/sbin/zabbix_agentd: listener #2 [waiting for connection]

├─1153 /usr/sbin/zabbix_agentd: listener #3 [waiting for connection]

└─1154 /usr/sbin/zabbix_agentd: active checks #1 [idle 1 sec]

Finally, check the logs to make sure SELinux is no longer blocking Zabbix:

[root@centos ~ ]# grep zabbix_proxy /var/log/audit/audit.log

…

[root@centos ~ ]# tail -n 100 /var/log/zabbix/zabbix_agentd.log
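For a quick summary of the audit log, the sketch below counts the AVC denial records that mention zabbix; `zabbix_denials` is a hypothetical helper name and the default path is the standard audit log grepped above (reading it needs root). Keep in mind that denials in a permissive domain are still logged, they are just not enforced, so a non-zero count alone does not mean the agent is blocked. With the audit tools installed, `ausearch -m avc -c zabbix_agentd` gives a more structured view of the same records.

```shell
# Hypothetical helper: prints how many AVC "denied" records in an audit log
# mention zabbix (default: /var/log/audit/audit.log).
zabbix_denials() {
    log="${1:-/var/log/audit/audit.log}"
    awk '/type=AVC/ && /denied/ && /zabbix/ { n++ } END { print n + 0 }' "$log"
}
```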

If there are no errors and you can see the usual CPU / memory / disk etc. values collected by Zabbix, you're good. Enjoy! 🙂