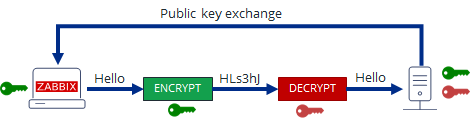

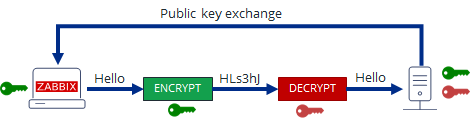

Those concerned of security and in use of their Zabbix monitored data who communicate Zabbix collected agent

data over internet or via some kind of untrusted network might definitely not enjoy the fact that zabbix-agent sents

its collected data to server in a plain text. Clear text data is allowing any network sniffer to possibly collect your

monitored server and hardware devices data and exposes all data sent over the network to same problems like in the past

the old uencrypted SMTP protocol.

To mitigate those great security hole for the paranoid sys admin it is rather easy to enable PSK (Pre Shared Key) based encryption.

To generate Pre Shared key you have to had to important values present

1. PSK Identity

2. PSK Secret

PSK secret should be minimum of 128 bit (16-byte PSK, entered as 32 hexadecimal digits), and supports up to

2048 bit (256-byte PSK, entered as 512 hexadecimal digits)

Usually something like 256 bit PSK secret on the machine should be strong enough and simply generated by running

# openssl rand -hex 32

1. Agent to zabbix server or proxy connection config

In /etc/zabbix/zabbix_agentd.conf for a Server Active (e.g. server to actively request the client to sent its collected data)

On machine running zabbix-agent should have a configuration similar to:

# cat /etc/zabbix/zabbix_agentd.conf

PidFile=/var/run/zabbix/zabbix_agentd.pid

LogFile=/var/log/zabbix/zabbix_agentd.log

LogFileSize=0

# IP of the machine

SourceIP=10.10.10.30

# turn it on if you need to execute to remote machine commands

EnableRemoteCommands=0

# IP of the server

servers=10.30.50.80

ListenPort=10080

# IP of the machine

ListenIP=10.30.30.31

# IP of the server

ServerActive=10.30.50.80

HostMetadataItem=system.uname

BufferSize=5400

MaxLinesPerSecond=5

Timeout=10

AllowRoot=0

StartAgents=5

LogRemoteCommands=0

# Machine hostname

Hostname=fqdn-of-zabbix-data-collect-server.com

Include=/etc/zabbix/zabbix_agentd.d/*.conf

# Encryption

TLSConnect=psk

TLSAccept=psk

TLSPSKIdentity=PSK to Zabbix Server5

TLSPSKFile=/etc/zabbix/zabbix_agentd.psk

! Important security note

!!! The TLSPSKIdentity value you decide will not be encrypted on transport, so don't use anything sensitive.

Once you include the TSL config

2 Generate / Create Zabbix Agent Key

Generate the key with pseudo-random bites inside /etc/zabbix/zabbix_agentd_key.psk

# cd /etc/zabbix

# openssl rand -hex 32 > zabbix_agentd_key.psk

# chown zabbix:zabbix zabbix_agentd_key.psk

# chmod 600 zabbix_agentd_key.psk

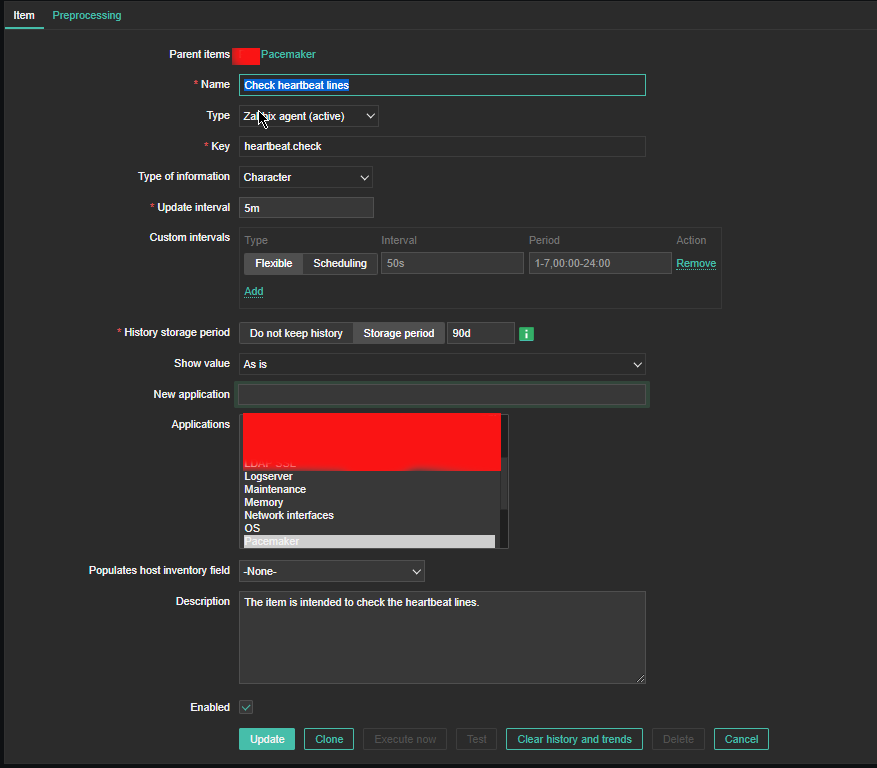

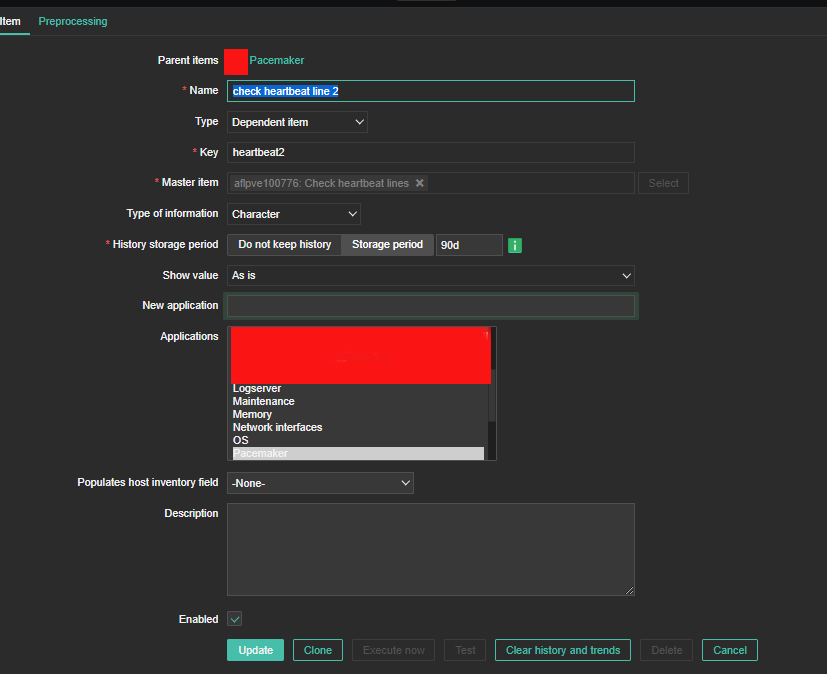

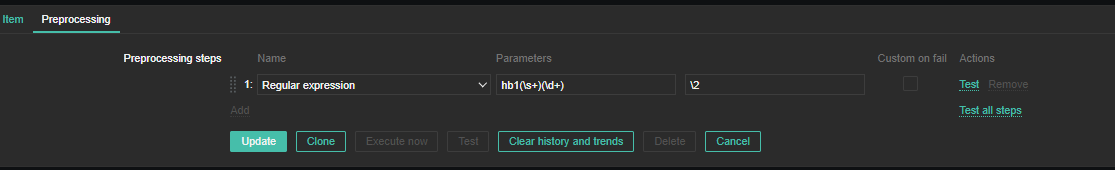

3. Configure PSK encryption in Zabbix Server Web User interface

Go to Zabbix Server User interface in browser and configure the PSK encryption options for the host.

Select the:

'Connections to host' = PSK

'Connections from host' = PSK

'PSK Identity' = [public-value-configured-in-Zabbix-agent-config]

'PSK' = [paste the long hex string generated from the OpenSSL command above]

In some seconds up to a minute or two the Zabbix Server and Agent will successfully communicate using PSK encryption.

Making the monitored data unreadable in plain text for malignant sniffers hanging in the middle equipment between the zabbix-agent and zabbix-server hosts.

4. PSK encryption behind a Proxy

Many companies, nowadays use zabbix proxy for improvement of network infrastrucutre. For example it is used to offload the zabbix-server when multiple zabbix-agents have to report various datas or to monitor servers and devices that are phyisically in separate networks or data centers (are passing through paranoic built firewalls) or monitor locations are having unreliable communications between each other.

To enable PSK for communications between your Zabbix Server and Zabbix Proxy.

1. Create a new secret, and add the PSK Identity and Secret to

Administration ⇾ Proxies ⇾ [Your proxy] ⇾ Encryption

2. Adjust the settings inside the zabbix proxies configuration file at /etc/zabbix/zabbix_proxy.conf

If setting up PSK encryption for agents behind a Zabbix proxy, ensure your have

Zabbix Server ⇽⇾ Proxy PSK enabled

first in Zabbix Server UI.

This is because, when you start the Proxy, or do some testing to send some key value to Zabbix server via the proxy with commands :

# zabbix_get -s 127.0.0.1 -k system.hostname

# zabbix_server -R config_cache_reload

config_cache_reload, the Proxy will download all its host settings from the server, and this also includes the servers copy of the secret.

The proxy needs to know the secret since it is now managing the communications on behalf of the server.

3. To add PSK encryption for any Agents behind a proxy, then you continue to set up the Agents as normal by creating a new secret, editing

Configuration ⇾ Hosts ⇾ [Your Host] ⇾ Encryption page

and also editing /etc/zabbix/zabbix_agentd.conf.

Remember that, since your Agents Host configuration in the Zabbix UI will be set as Monitored by Proxy, the PSK settings will be applicable for communications happening between the Zabbix Proxy and the Agent that it is monitoring, not between the Zabbix Server and the Agent behind the proxy.

You can also add PSK Encryption between your Zabbix Proxy and its own local Agent if you want.

You would set its PSK settings in the Proxy Agents host configuration at

Configuration ⇾ Hosts ⇾ [Your proxy] ⇾ Encryption

and modify the settings in the agents on configuration file at /etc/zabbix/zabbix_agentd.conf.

Keep in mind, this is only applicable to communications between the Zabbix Proxy, and its own Agent process.

When setting up PSK encryption for the Zabbix Server, Proxy and Agents, you may see an error in the Proxy logs,

cannot send proxy data to server at "zabbix.your-domain.tld": connection of type "TLS with PSK" is not allowed for proxy "your-proxy".

If you hit this, check that your

Zabbix Server ⇽⇾ Proxy PSK settings

are correct first.

Don't get confused between the Proxies own optional agent process, and its main Proxy process which is required.