I’ve been hired as a consultant recently to solve a small task on a newly bought Xen based dedicated server.

The server had installed on itself SolusVM

The server was a good hard-iron machine running with CentOS Linux with enabled Xen virtualization support.

The Data Center (DC) has provided the client with 4 IP public addresses, whether the machine was assigned to possess only one MAC address!

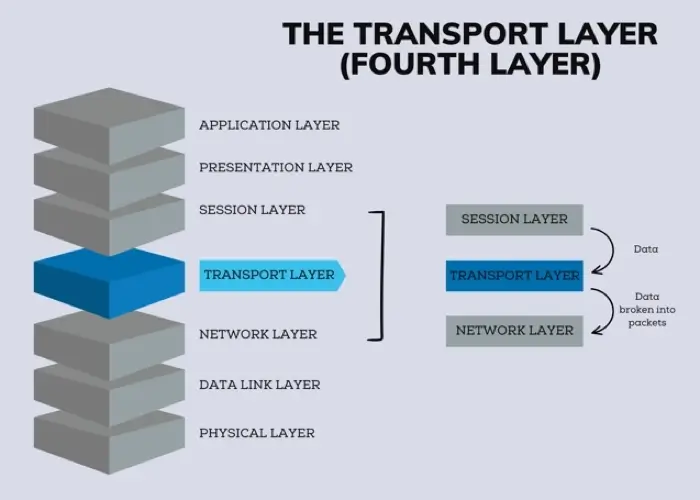

The original idea was the dedicated server is supposed to use 4 of the IP addresses assigned by the DC whether only one of the IPs has an external internet connected ethernet interface with assigned MAC address.

In that case using Xen’s bridging capabilities was pretty much impossible and therefore Xen’s routing mode has to be used, plus an Iptables Network Address Translation or an IP MASQUERADE .

In overall the server would have contained 3 virtual machines inside the Xen installed with 3 copies of:

The scenario I had to deal with is pretty much explained in Xen’s Networking wiki Two Way Routed Network

In this article I will describe as thoroughfully as I can how I configured the server to be able to use the 3 qemu virtual machines (running inside the Xen) with their respective real interner visible public IP addresses.

1. Enable Proxyarp for the eth0 interface

To enable proxyarp for eth0 on boot time and in real time on the server issue the commands:

[root@centos ~]# echo 1 > /proc/sys/net/ipv4/conf/eth0/proxy_arp[root@centos ~]# echo 'net.ipv4.conf.all.proxy_arp = 1' >> /etc/sysctl.conf

2. Enable IP packet forwarding for eth interfaces

This is important pre-requirement in order to make the iptables NAT to work.

[root@centos ~]# echo 'net.ipv4.ip_forward = 1' >> /etc/sysctl.conf

[root@centos ~]# echo 'net.ipv6.conf.all.forwarding=1' >> /etc/sysctl.conf

If you get errors during execution of /etc/init.d/xendomains , like for example:

[root@centos ~]# /etc/init.d/xendomains restart

/etc/xen/scripts/network-route: line 29: /proc/sys/net/ipv4/conf/eth0/proxy_arp: No such file or directory

/etc/xen/scripts/network-route: line 29: /proc/sys/net/ipv6/conf/eth0/proxy_arp: No such file or directory

in order to get rid of the message you will have to edit /etc/xen/scripts/network-route and comment out the lines:

echo 1 >/proc/sys/net/ipv4/conf/${netdev}/proxy_arp

echo 1 > /proc/sys/net/ipv6/conf/eth0/proxy_arp

e.g.

#echo 1 >/proc/sys/net/ipv4/conf/${netdev}/proxy_arp

#echo 1 > /proc/sys/net/ipv6/conf/eth0/proxy_arp

3. Edit /etc/xen/xend-config.sxp, disable ethernet bridging and enable eth0 routing (route mode) and NAT for Xen’s routed mode

Make absolutely sure that in /etc/xen/xend-config.sxp the lines related to bridging are commented.

The lines you need to comment out are:

(network-script network-bridge)

(vif-script vif-bridge)

make them look like:

#(network-script network-bridge)

#(vif-script vif-bridge)br />

Now as bridging is disabled let’s enable Xen routed network traffic as an bridged networking alternative.

Find the commented (network-script network-route) and (vif-script vif-route) lines and uncomment them:

#(network-script network-route)

#(vif-script vif-route)

The above commented lines should become:

(network-script network-route)

(vif-script vif-route)

Next step is to enable NAT for routed traffic in Xen (necessery to make routed mode work).

Below commented two lines in /etc/xen/xend-config.sxp, should be uncommented e.g.:

#(network-script network-nat)

#(vif-script vif-nat)

Should become:

(network-script network-nat)

(vif-script vif-nat)

4. Restart Xen control daemon and reload installed Xen’s Virtual Machines installed domains

To do so invoke the commands:

[root@centos ~]# /etc/init.d/xend

[root@centos ~]# /etc/init.d/xendomains restart

This two commands will probably take about 7 to 10 minutes (at least they took this serious amount of time in my case).

If you think this time is too much to speed-up the procedure of restarting Xen and qemu attached virtual machines, restart the whole Linux server, e.g.:

[root@centos ~]# restart

5. Configure iptables NAT rules on the CentOS host

After the server boots up, you will have to initiate the following ifconfig & iptables rules in order to make the Iptables NAT to work out:

echo > > /proc/sys/net/ipv4/conf/tap1.0/proxy_arp

/sbin/ifconfig eth0:1 11.22.33.44 netmask 255.255.252.0

/sbin/ifconfig eth0:2 22.33.44.55 netmask 255.255.252.0

/sbin/ifconfig eth0:3 33.44.55.66 netmask 255.255.252.0

/sbin/iptables -t nat -A PREROUTING -d 11.22.33.44 -i eth0 -j DNAT --to-destination 192.168.1.2

/sbin/iptables -t nat -A PREROUTING -d 22.33.44.55 -i eth0 -j DNAT --to-destination 192.168.1.3

/sbin/iptables -t nat -A PREROUTING -d 33.44.55.66 -i eth0 -j DNAT --to-destination 192.168.1.4

/sbin/iptables -t nat -A POSTROUTING -s 192.168.1.2 -o eth0 -j SNAT --to-source 11.22.33.44

/sbin/iptables -t nat -A POSTROUTING -s 192.168.1.3 -o eth0 -j SNAT --to-source 22.33.44.55

/sbin/iptables -t nat -A POSTROUTING -s 192.168.1.4 -o eth0 -j SNAT --to-source 33.44.55.66

In the above ifconfig and iptables rules the IP addresses:

11.22.33.44, 22.33.44.55, 33.44.55.66 are real IP addresses visible from the Internet.

In the above rules eth0:1, eth0:2 and eth0:3 are virtual ips assigned to the main eth0 interface.

This ifconfig and iptables setup assumes that the 3 Windows virtual machines running inside the Xen dedicated server will be configured to use (local) private network IP addresses:

192.168.1.2, 192.168.1.3 and 192.168.1.4

You will have also to substitute the 11.22.33.44, 22.33.44.55 and 33.44.55.66 with your real IP addreses.

To store the iptables rules permanently on the fedora you can use the iptables-save command:

[root@centos ~]# /sbin/iptables-save

However I personally did not use this approach to save my inserserted iptable rules for later boots but I use my small script set_ips.sh to add virtual interfaces and iptables rules via the /etc/rc.local invokation:

If you like the way I have integrated my virtual eths initiation and iptables kernel firewall inclusion, download my script and set it to run in /etc/rc.local, like so:

[root@centos ~]# cd /usr/sbin

[root@centos sbin]# wget https://www.pc-freak.net/bshscr/set_ips.sh

...

[root@centos ~]# chmod +x /usr/sbin/set_ips.sh

[root@centos ~]# mv set_ips.sh /usr/sbin

[root@centos ~]# echo '/usr/sbin/set_ips.sh' >> /etc/rc.local

Note that you will have to modify my set_ips.sh script to substitute the 11.22.33.44, 22.33.44.55 and 33.44.55.66 with your real IP address.

So far so good, one might think that all this should be enough for the Virtual Machines Windows hosts to be able to connect to the Internet and Internet requests to the virtual machines to arrive, but no it’s not!!

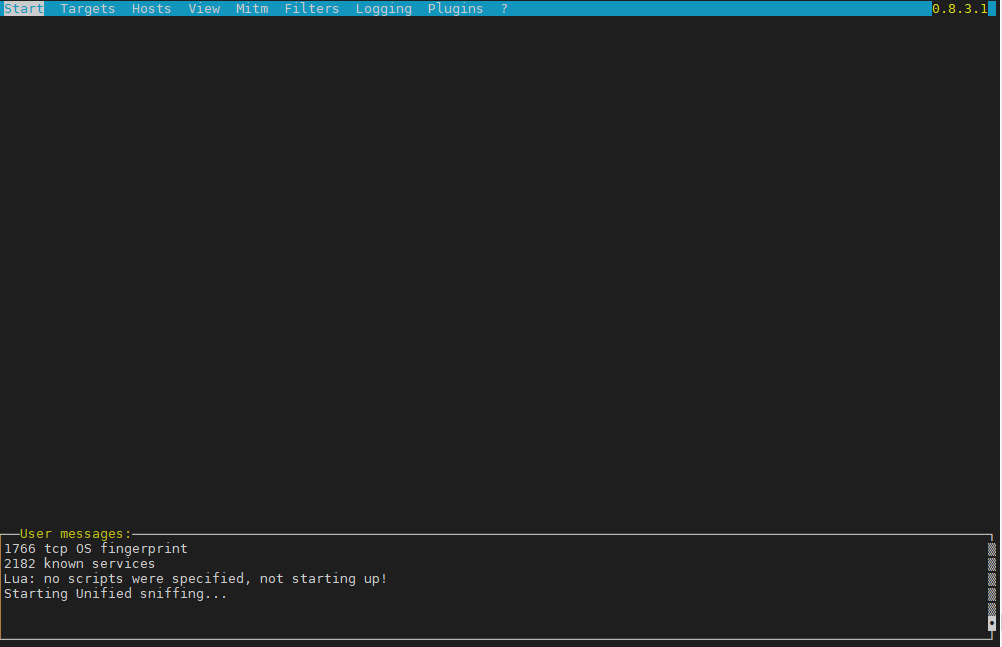

6. Debugging Limited Connectivity Windows LAN troubles on the Xen dedicated server

Even though the iptables rules were correct and the vif route and vif nat was enabled inside the Xen node, as well as everything was correctly configured in the Windows 2008 host Virtual machines, the virtual machines’s LAN cards were not able to connect properly to connect to the internet and the Windows LAN interface kept constantly showing Limited Connectivity! , neither a ping was available to the gateway configured for the Windows VM host (which in my case was: 192.168.1.1).

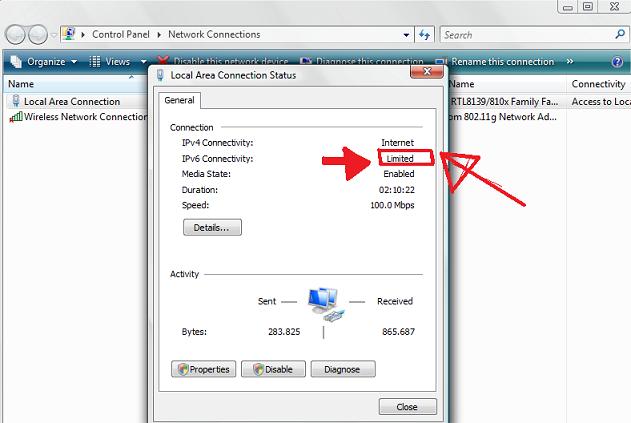

You see the error with Limited connectivity inside the Windows on below’s screenshot:

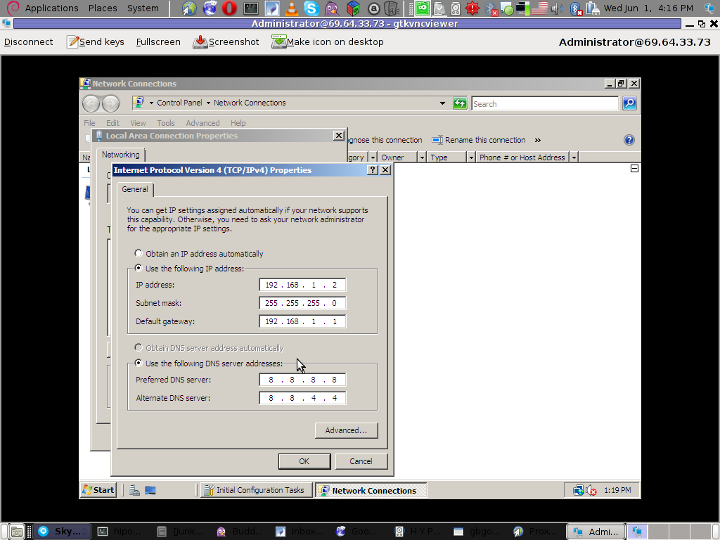

Here is also a screenshot of my VNC connection to the Virtual machine with the correct IP settings – (TCP/IPv4) Properties Window:

This kind of Limited Connectivity VM Windows error was really strange and hard to diagnose, thus I started investigating what is wrong with this whole situation and why is not able the Virtualized Windows to connect properly to the Internet, through the Iptables NAT inbound and outbound traffic redirection.

To diagnose the problem, I started up with listing the exact network interfaces showing to be on the Xen Dedicated server:

[root@centos ~]# /sbin/ifconfig |grep -i 'Link encap' -A 1

eth0 Link encap:Ethernet HWaddr 00:19:99:9C:08:3A

inet addr:111.22.33.55 Bcast:111.22.33.255

Mask:255.255.252.0

--

eth0:1 Link encap:Ethernet HWaddr 00:19:99:9C:08:3A

inet addr:11.22.33.44 Bcast:11.22.33.255

Mask:255.255.252.0

--

eth0:2 Link encap:Ethernet HWaddr 00:19:99:9C:08:3A

inet addr:22.33.44.55 Bcast:22.33.44.255

Mask:255.255.252.0

--

eth0:3 Link encap:Ethernet HWaddr 00:19:99:9C:08:3A

inet addr:33.44.55.66 Bcast:33.44.55.255

Mask:255.255.252.0

--

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

--

tap1.0 Link encap:Ethernet HWaddr FA:07:EF:CA:13:31

--

vifvm101.0 Link encap:Ethernet HWaddr FE:FF:FF:FF:FF:FF

inet addr:111.22.33.55 Bcast:111.22.33.55

Mask:255.255.255.255

I started debugging the issue, using the expelling logic.

In the output concerning my interfaces via ifconfig on eth0, I have my primary server IP address 111.22.33.55 , this one is working for sure as I was currently connected to the server through it.

The other virtual IP addresses assigned on the virtual network interfaces eth0:1, eth0:2 and eth0:3 were also assigned correctly as I was able to ping this ips from my Desktop machine from the Internet.

The lo , interface was also properly configured as I could ping without a problem the loopback ip – 127.0.0.1

The rest of the interfaces displayed by my ifconfig output were: tap1.0, vifvm101.0

After a bit of ressearch, I’ve figured out that they’re virtual interfaces and they belong to the Xen domains which are running qemu virtual machines with the Windows host.

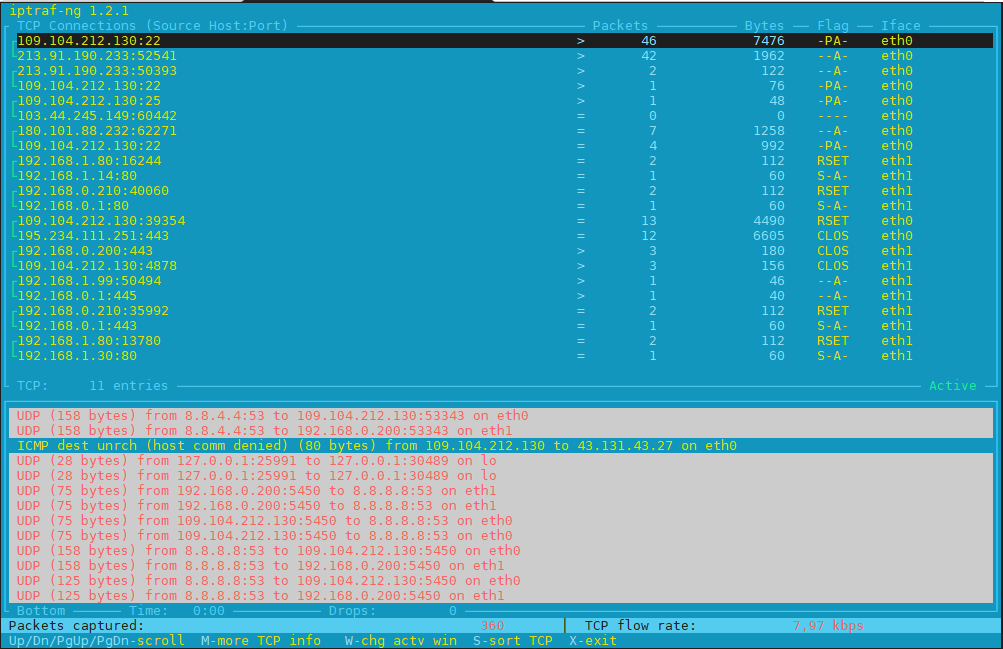

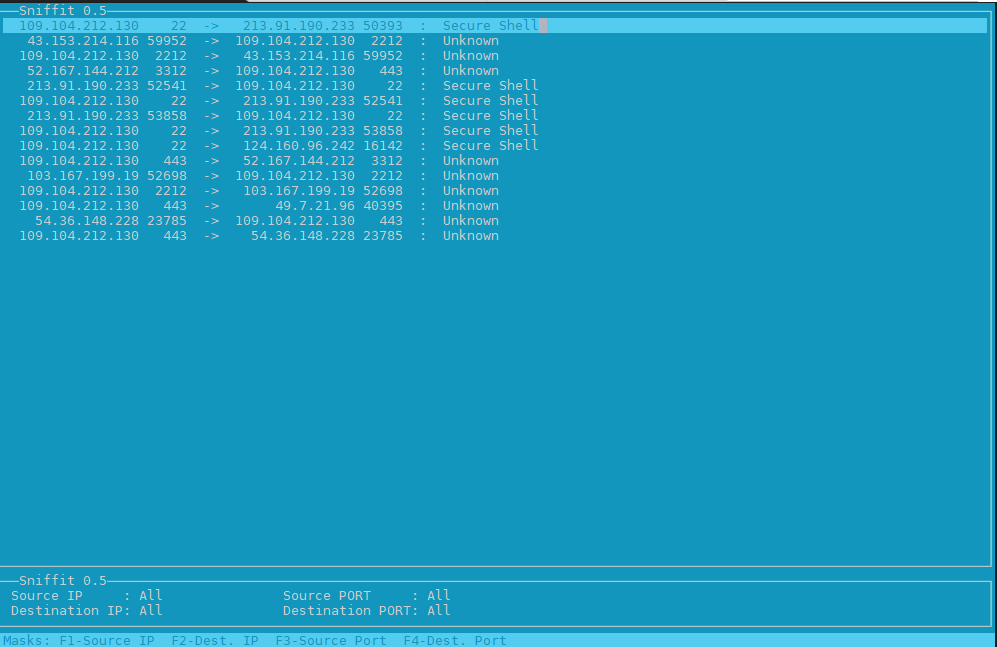

I used tcpdump to debug what kind of traffic does flow through the tap1.0 and vifvm101.0 interfaces, like so

[root@centos ~]# tcpdump -i vifvm101.0

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on vifvm101.0, link-type EN10MB (Ethernet), capture size 96 bytes

^C

0 packets captured

0 packets received by filter

0 packets dropped by kernel

[root@centos ~]# tcpdump -i tap1.0

cpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on tap1.0, link-type EN10MB (Ethernet), capture size 96 bytes

^C

08:55:52.490249 IP 229.197.34.95.customer.cdi.no.15685 > 192.168.1.2.12857: UDP, length 42

I’ve figured out as it’s also observable in above’s two tcpdump commands output, that nothing flows through the vifvm101.0 interface, and that there was some traffic passing by tap1.0 interface.

7. Solving the Limited Connectivy Windows Internet network connection problems

As below’s ifconfig output reveals, there is no IP address assigned to tap1.0 interface, using some guidelines and suggestions from guys in irc.freenode.net’s #netfilter irc channel, I’ve decided to give a go to set up an IP address of 192.168.1.1 to tap1.0 .

I choose for a reason as this IP address is configured to be my Gateway’s IP Address inside the Emulated Windows 2008 hosts

To assign the 192.168.1.1 to tap1.0, I issued:

[root@centos ~]# /sbin/ifconfig tap1.0 192.168.1.1 netmask 255.255.255.0

To test if there is difference I logged in to the Virtual Machine host with gtkvncviewer (which by the way is a very nice VNC client for Gnome) and noticed there was an established connection to the internet inside the Virtual Machine 😉

I issued a ping to google which was also returned and opened a browser to really test if everything is fine with the Internet.

Thanks God! I could browse and everything was fine 😉

8. Making tap1.0 192.168.1.1 (VM hosts gateway to be set automatically, each time server reboots)

After rebooting the server the tap1.0 assignmend of 192.168.1.1 disappeared thus I had to make the 192.168.1.1, be assigned automatically each time the CentoS server boots.

To give it a try, I decided to place /sbin/ifconfig tap1.0 192.168.1.1 netmask 255.255.255.0 into /etc/rc.local, but this worked not as the tap1.0 interface got initialized a while after all the xendomains gets initialized.

I tried few times to set some kind of sleep time interval with the sleep , right before the /sbin/ifconfig tap1.0 … ip initialization but this did not worked out, so I finally completely abandoned this methodology and make the tap1.0 get initialized with an IP through a cron daemon.

For that purpose I’ve created a script to be invoked, every two minutes via cron which checked if the tap1.0 interface is up and if not issues the ifconfig command to initialize the interface and assign the 192.168.1.1 IP to it.

Here is my set_tap_1_iface.sh shell script

To set it up on your host in /usr/sbin issue:

[root@centos ~]# cd /usr/sbin/

[root@centos sbin]# wget https://www.pc-freak.net/bshscr/set_tap_1_iface.sh

...

In order to set it on cron to make the tap1.0 initialization automatically every two minutes use the cmd:

[root@centos ~]# crontab -u root -e

After the cronedit opens up, place the set_tap_1_iface.sh cron invokation rules:

*/2 * * * * /usr/sbin/set_tap_1_iface.sh >/dev/null 2>&1

and save.

That’s all now your Xen dedicated and the installed virtual machines with their public internet IPs will work 😉

If this article helped you to configure your NAT routing in Xen drop me a thanks message, buy me a beer or hire me! Cheers 😉

How to configure and enable Xen Linux dedicated server’s Virtual machines Internet to work / Enable multipe real IPs and one MAC only in (SolusVM) through NAT routed and iptables

Saturday, June 4th, 2011I’ve been hired as a consultant recently to solve a small task on a newly bought Xen based dedicated server.

The server had installed on itself SolusVM

The server was a good hard-iron machine running with CentOS Linux with enabled Xen virtualization support.

The Data Center (DC) has provided the client with 4 IP public addresses, whether the machine was assigned to possess only one MAC address!

The original idea was the dedicated server is supposed to use 4 of the IP addresses assigned by the DC whether only one of the IPs has an external internet connected ethernet interface with assigned MAC address.

In that case using Xen’s bridging capabilities was pretty much impossible and therefore Xen’s routing mode has to be used, plus an Iptables Network Address Translation or an IP MASQUERADE .

In overall the server would have contained 3 virtual machines inside the Xen installed with 3 copies of:

The scenario I had to deal with is pretty much explained in Xen’s Networking wiki Two Way Routed Network

In this article I will describe as thoroughfully as I can how I configured the server to be able to use the 3 qemu virtual machines (running inside the Xen) with their respective real interner visible public IP addresses.

1. Enable Proxyarp for the eth0 interface

To enable proxyarp for eth0 on boot time and in real time on the server issue the commands:

[root@centos ~]# echo 1 > /proc/sys/net/ipv4/conf/eth0/proxy_arp[root@centos ~]# echo 'net.ipv4.conf.all.proxy_arp = 1' >> /etc/sysctl.conf

2. Enable IP packet forwarding for eth interfaces

This is important pre-requirement in order to make the iptables NAT to work.

[root@centos ~]# echo 'net.ipv4.ip_forward = 1' >> /etc/sysctl.conf

[root@centos ~]# echo 'net.ipv6.conf.all.forwarding=1' >> /etc/sysctl.conf

If you get errors during execution of /etc/init.d/xendomains , like for example:

[root@centos ~]# /etc/init.d/xendomains restart

/etc/xen/scripts/network-route: line 29: /proc/sys/net/ipv4/conf/eth0/proxy_arp: No such file or directory

/etc/xen/scripts/network-route: line 29: /proc/sys/net/ipv6/conf/eth0/proxy_arp: No such file or directory

in order to get rid of the message you will have to edit /etc/xen/scripts/network-route and comment out the lines:

echo 1 >/proc/sys/net/ipv4/conf/${netdev}/proxy_arp

echo 1 > /proc/sys/net/ipv6/conf/eth0/proxy_arp

e.g.

#echo 1 >/proc/sys/net/ipv4/conf/${netdev}/proxy_arp

#echo 1 > /proc/sys/net/ipv6/conf/eth0/proxy_arp

3. Edit /etc/xen/xend-config.sxp, disable ethernet bridging and enable eth0 routing (route mode) and NAT for Xen’s routed mode

Make absolutely sure that in /etc/xen/xend-config.sxp the lines related to bridging are commented.

The lines you need to comment out are:

(network-script network-bridge)(vif-script vif-bridge)

make them look like:

#(network-script network-bridge)#(vif-script vif-bridge)br />

Now as bridging is disabled let’s enable Xen routed network traffic as an bridged networking alternative.

Find the commented (network-script network-route) and (vif-script vif-route) lines and uncomment them:

#(network-script network-route)

#(vif-script vif-route)

The above commented lines should become:

(network-script network-route)

(vif-script vif-route)

Next step is to enable NAT for routed traffic in Xen (necessery to make routed mode work).

Below commented two lines in /etc/xen/xend-config.sxp, should be uncommented e.g.:

#(network-script network-nat)

#(vif-script vif-nat)

Should become:

(network-script network-nat)

(vif-script vif-nat)

4. Restart Xen control daemon and reload installed Xen’s Virtual Machines installed domains

To do so invoke the commands:

[root@centos ~]# /etc/init.d/xend

[root@centos ~]# /etc/init.d/xendomains restart

This two commands will probably take about 7 to 10 minutes (at least they took this serious amount of time in my case).

If you think this time is too much to speed-up the procedure of restarting Xen and qemu attached virtual machines, restart the whole Linux server, e.g.:

[root@centos ~]# restart

5. Configure iptables NAT rules on the CentOS host

After the server boots up, you will have to initiate the following ifconfig & iptables rules in order to make the Iptables NAT to work out:

echo > > /proc/sys/net/ipv4/conf/tap1.0/proxy_arp/sbin/ifconfig eth0:1 11.22.33.44 netmask 255.255.252.0

/sbin/ifconfig eth0:2 22.33.44.55 netmask 255.255.252.0

/sbin/ifconfig eth0:3 33.44.55.66 netmask 255.255.252.0

/sbin/iptables -t nat -A PREROUTING -d 11.22.33.44 -i eth0 -j DNAT --to-destination 192.168.1.2

/sbin/iptables -t nat -A PREROUTING -d 22.33.44.55 -i eth0 -j DNAT --to-destination 192.168.1.3

/sbin/iptables -t nat -A PREROUTING -d 33.44.55.66 -i eth0 -j DNAT --to-destination 192.168.1.4

/sbin/iptables -t nat -A POSTROUTING -s 192.168.1.2 -o eth0 -j SNAT --to-source 11.22.33.44

/sbin/iptables -t nat -A POSTROUTING -s 192.168.1.3 -o eth0 -j SNAT --to-source 22.33.44.55

/sbin/iptables -t nat -A POSTROUTING -s 192.168.1.4 -o eth0 -j SNAT --to-source 33.44.55.66

In the above ifconfig and iptables rules the IP addresses:

11.22.33.44, 22.33.44.55, 33.44.55.66 are real IP addresses visible from the Internet.

In the above rules eth0:1, eth0:2 and eth0:3 are virtual ips assigned to the main eth0 interface.

This ifconfig and iptables setup assumes that the 3 Windows virtual machines running inside the Xen dedicated server will be configured to use (local) private network IP addresses:

192.168.1.2, 192.168.1.3 and 192.168.1.4

You will have also to substitute the 11.22.33.44, 22.33.44.55 and 33.44.55.66 with your real IP addreses.

To store the iptables rules permanently on the fedora you can use the iptables-save command:

[root@centos ~]# /sbin/iptables-save

However I personally did not use this approach to save my inserserted iptable rules for later boots but I use my small script set_ips.sh to add virtual interfaces and iptables rules via the /etc/rc.local invokation:

If you like the way I have integrated my virtual eths initiation and iptables kernel firewall inclusion, download my script and set it to run in /etc/rc.local, like so:

[root@centos ~]# cd /usr/sbin

[root@centos sbin]# wget https://www.pc-freak.net/bshscr/set_ips.sh

...

[root@centos ~]# chmod +x /usr/sbin/set_ips.sh

[root@centos ~]# mv set_ips.sh /usr/sbin

[root@centos ~]# echo '/usr/sbin/set_ips.sh' >> /etc/rc.local

Note that you will have to modify my set_ips.sh script to substitute the 11.22.33.44, 22.33.44.55 and 33.44.55.66 with your real IP address.

So far so good, one might think that all this should be enough for the Virtual Machines Windows hosts to be able to connect to the Internet and Internet requests to the virtual machines to arrive, but no it’s not!!

6. Debugging Limited Connectivity Windows LAN troubles on the Xen dedicated server

Even though the iptables rules were correct and the vif route and vif nat was enabled inside the Xen node, as well as everything was correctly configured in the Windows 2008 host Virtual machines, the virtual machines’s LAN cards were not able to connect properly to connect to the internet and the Windows LAN interface kept constantly showing Limited Connectivity! , neither a ping was available to the gateway configured for the Windows VM host (which in my case was: 192.168.1.1).

You see the error with Limited connectivity inside the Windows on below’s screenshot:

Here is also a screenshot of my VNC connection to the Virtual machine with the correct IP settings – (TCP/IPv4) Properties Window:

This kind of Limited Connectivity VM Windows error was really strange and hard to diagnose, thus I started investigating what is wrong with this whole situation and why is not able the Virtualized Windows to connect properly to the Internet, through the Iptables NAT inbound and outbound traffic redirection.

To diagnose the problem, I started up with listing the exact network interfaces showing to be on the Xen Dedicated server:

[root@centos ~]# /sbin/ifconfig |grep -i 'Link encap' -A 1

eth0 Link encap:Ethernet HWaddr 00:19:99:9C:08:3A

inet addr:111.22.33.55 Bcast:111.22.33.255

Mask:255.255.252.0

--

eth0:1 Link encap:Ethernet HWaddr 00:19:99:9C:08:3A

inet addr:11.22.33.44 Bcast:11.22.33.255

Mask:255.255.252.0

--

eth0:2 Link encap:Ethernet HWaddr 00:19:99:9C:08:3A

inet addr:22.33.44.55 Bcast:22.33.44.255

Mask:255.255.252.0

--

eth0:3 Link encap:Ethernet HWaddr 00:19:99:9C:08:3A

inet addr:33.44.55.66 Bcast:33.44.55.255

Mask:255.255.252.0

--

lo Link encap:Local Loopback

inet addr:127.0.0.1 Mask:255.0.0.0

--

tap1.0 Link encap:Ethernet HWaddr FA:07:EF:CA:13:31

--

vifvm101.0 Link encap:Ethernet HWaddr FE:FF:FF:FF:FF:FF

inet addr:111.22.33.55 Bcast:111.22.33.55

Mask:255.255.255.255

I started debugging the issue, using the expelling logic.

In the output concerning my interfaces via ifconfig on eth0, I have my primary server IP address 111.22.33.55 , this one is working for sure as I was currently connected to the server through it.

The other virtual IP addresses assigned on the virtual network interfaces eth0:1, eth0:2 and eth0:3 were also assigned correctly as I was able to ping this ips from my Desktop machine from the Internet.

The lo , interface was also properly configured as I could ping without a problem the loopback ip – 127.0.0.1

The rest of the interfaces displayed by my ifconfig output were: tap1.0, vifvm101.0

After a bit of ressearch, I’ve figured out that they’re virtual interfaces and they belong to the Xen domains which are running qemu virtual machines with the Windows host.

I used tcpdump to debug what kind of traffic does flow through the tap1.0 and vifvm101.0 interfaces, like so

[root@centos ~]# tcpdump -i vifvm101.0

tcpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on vifvm101.0, link-type EN10MB (Ethernet), capture size 96 bytes

^C

0 packets captured

0 packets received by filter

0 packets dropped by kernel

[root@centos ~]# tcpdump -i tap1.0

cpdump: verbose output suppressed, use -v or -vv for full protocol decode

listening on tap1.0, link-type EN10MB (Ethernet), capture size 96 bytes

^C

08:55:52.490249 IP 229.197.34.95.customer.cdi.no.15685 > 192.168.1.2.12857: UDP, length 42

I’ve figured out as it’s also observable in above’s two tcpdump commands output, that nothing flows through the vifvm101.0 interface, and that there was some traffic passing by tap1.0 interface.

7. Solving the Limited Connectivy Windows Internet network connection problems

As below’s ifconfig output reveals, there is no IP address assigned to tap1.0 interface, using some guidelines and suggestions from guys in irc.freenode.net’s #netfilter irc channel, I’ve decided to give a go to set up an IP address of 192.168.1.1 to tap1.0 .

I choose for a reason as this IP address is configured to be my Gateway’s IP Address inside the Emulated Windows 2008 hosts

To assign the 192.168.1.1 to tap1.0, I issued:

[root@centos ~]# /sbin/ifconfig tap1.0 192.168.1.1 netmask 255.255.255.0

To test if there is difference I logged in to the Virtual Machine host with gtkvncviewer (which by the way is a very nice VNC client for Gnome) and noticed there was an established connection to the internet inside the Virtual Machine 😉I issued a ping to google which was also returned and opened a browser to really test if everything is fine with the Internet.

Thanks God! I could browse and everything was fine 😉

8. Making tap1.0 192.168.1.1 (VM hosts gateway to be set automatically, each time server reboots)

After rebooting the server the tap1.0 assignmend of 192.168.1.1 disappeared thus I had to make the 192.168.1.1, be assigned automatically each time the CentoS server boots.

To give it a try, I decided to place /sbin/ifconfig tap1.0 192.168.1.1 netmask 255.255.255.0 into /etc/rc.local, but this worked not as the tap1.0 interface got initialized a while after all the xendomains gets initialized.

I tried few times to set some kind of sleep time interval with the sleep , right before the /sbin/ifconfig tap1.0 … ip initialization but this did not worked out, so I finally completely abandoned this methodology and make the tap1.0 get initialized with an IP through a cron daemon.

For that purpose I’ve created a script to be invoked, every two minutes via cron which checked if the tap1.0 interface is up and if not issues the ifconfig command to initialize the interface and assign the 192.168.1.1 IP to it.

Here is my set_tap_1_iface.sh shell script

To set it up on your host in /usr/sbin issue:

[root@centos ~]# cd /usr/sbin/

In order to set it on cron to make the tap1.0 initialization automatically every two minutes use the cmd:[root@centos sbin]# wget https://www.pc-freak.net/bshscr/set_tap_1_iface.sh

...

[root@centos ~]# crontab -u root -e

After the cronedit opens up, place the set_tap_1_iface.sh cron invokation rules:

*/2 * * * * /usr/sbin/set_tap_1_iface.sh >/dev/null 2>&1

and save.

That’s all now your Xen dedicated and the installed virtual machines with their public internet IPs will work 😉

If this article helped you to configure your NAT routing in Xen drop me a thanks message, buy me a beer or hire me! Cheers 😉

Tags: addr, amount, arp, arpecho, Bcast, boot time, center dc, control, dedicated server, echo 1, eth, execution, external internet, host, ip masquerade, ips, iptables nat, ipv, ipv4, ipv6, iron machine, mac address, memory, Metric, microsoft windows, modeMake, necessery, Netmask, network address translation, POSTROUTING, proxy arp, public addresses, public ip addresses, qemu, Restart, root, screenshot, sxp, time, uncomment, vif, Virtual, virtual machines, work, xend

Posted in Linux, System Administration | 2 Comments »