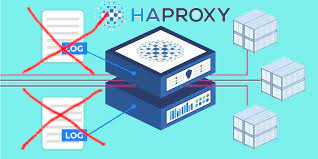

In my previous article I briefly explained how to configure multiple haproxy instances to log to separate log files, as well as how to configure a specific frontend to log to a separate file. Sometimes it is simply unnecessary to keep any kind of log file for haproxy, either to save disk space or to keep traffic anonymous. Hence this short article explains how to disable logging globally for haproxy and how logging can be stopped for a particular frontend or backend.

1. Disable logging globally for the haproxy service

If you don't need haproxy logs at all, logging can be disabled globally by pointing the log directive at /dev/null. To also mute the recurring alert, notice and info messages that are produced on unusual events during haproxy start / stop, or when backends are missing, you can send those messages to the local0 and local1 facilities, which are later discarded by the rsyslogd configuration. For example, this can be achieved with a configuration like:

global

log /dev/log local0 info alert

log /dev/log local1 notice alert

defaults

log global

mode http

option httplog

option dontlognull

<level> is optional and can be specified to filter outgoing messages. By default, all messages are sent. If a level is specified, only messages with a severity at least as important as this level will be sent. An optional minimum level can be specified. If it is set, logs emitted with a more severe level than this one will be capped to this level. This is used to avoid sending "emerg" messages on all terminals on some default syslog configurations.

Eight levels are known :

emerg alert crit err warning notice info debug
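To make the level and minimum-level arguments concrete, here is a minimal sketch of two annotated log lines. The facilities and levels are illustrative choices, not a recommendation, and the indentation is only for readability:

```
global
    # Only messages of severity "notice" or more important
    # (notice, warning, err, crit, alert, emerg) are sent to local0.
    log /dev/log local0 notice

    # Messages of severity "info" or more important are sent to local1;
    # anything more severe than "alert" (that is, "emerg") is capped to "alert".
    log /dev/log local1 info alert
```

With the second line, an "emerg" event still reaches syslog, but it arrives tagged as "alert", which is what keeps it from being broadcast to all terminals on some default syslog setups.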

By using the log level you can also tell haproxy to keep errors out of the log if, for some reason, haproxy receives a lot of errors and they are flooding your logs, like this:

backend Backend_Interface

http-request set-log-level err

no log
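As a related sketch, http-request set-log-level also accepts the special level silent, which disables logging for the matching request only. The ACL name is_healthcheck and the path /health below are hypothetical and only serve as an illustration:

```
backend Backend_Interface
    mode http
    # Hypothetical ACL: matches requests for an assumed health-check path.
    acl is_healthcheck path /health
    # "silent" suppresses the log line for matching requests only;
    # all other requests keep their normal log level.
    http-request set-log-level silent if is_healthcheck
```

This can be a gentler option than no log when only one noisy class of requests needs to be hidden.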

But sometimes you might need to disable logging for a single frontend only, which raises the question:

2. How to disable logging for a single frontend interface?

I thought this might be more complex, but it turned out to be pretty easy with the option dontlog-normal directive in haproxy.cfg.

Here is a sample configuration with a frontend and a backend showing how to instruct the haproxy frontend to stop logging normal, successful connections. Note that with this option errors, timeouts, retries and redispatches are still logged:

frontend ft_Frontend_Interface

# log 127.0.0.1 local4 debug

bind 10.44.192.142:12345

option dontlog-normal

mode tcp

option tcplog

timeout client 350000

log-format [%t]\ %ci:%cp\ %fi:%fp\ %b/%s:%sp\ %Tw/%Tc/%Tt\ %B\ %ts\ %ac/%fc/%bc/%sc/%rc\ %sq/%bq

default_backend bk_Backend_Interface

backend bk_Backend_Interface

timeout server 350000

timeout connect 35000

server serverhost1 10.10.192.12:12345 weight 1 check port 12345

server serverhost2 10.10.192.13:12345 weight 3 check port 12345

As you can see from this config, we have also enabled a health check on port 12345 (check port 12345), which is the application's service port. If something goes wrong with the application and port 12345 stops responding, the respective server is automatically excluded by haproxy and only the remaining machine serves traffic. The weight tells haproxy which server is preferred: with weights 1 and 3, roughly 1 out of every 4 requests ends up on serverhost1 and 3 on serverhost2.
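If the goal is to silence the frontend completely, including errors, a possible variant is to use no log in the frontend instead of option dontlog-normal. This is a minimal sketch that reuses the addresses and names from the example above and assumes the frontend would otherwise inherit its log targets from the defaults section:

```
frontend ft_Frontend_Interface
    bind 10.44.192.142:12345
    mode tcp
    # "no log" flushes the logger list inherited from "defaults",
    # so neither normal connections nor errors are logged for this frontend.
    no log
    timeout client 350000
    default_backend bk_Backend_Interface
```

The trade-off is that failed or timed-out connections on this frontend also disappear from the logs, so troubleshooting becomes harder.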

3. How to disable logging for a single backend but still keep a log for the frontend

Omit option dontlog-normal from the frontend, and inside the backend just set no log:

backend bk_Backend_Interface

no log

timeout server 350000

timeout connect 35000

server serverhost1 10.10.192.12:12345 weight 1 check port 12345

server serverhost2 10.10.192.13:12345 weight 3 check port 12345

That's all. Reload the haproxy service on the machine and the backend will no longer log to your default configured log file via the respective local0 to local7 facility.